KB ID 0000318

Problem

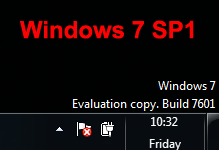

A while ago I wrote an article on how to remove the 7600 watermark. Then I noticed this bad boy had appeared. The culprit for my watermark's reappearance is the Windows 7 SP1 beta that I'm running. (Update 11/01/10: this also occurs with the SP1 Release Candidate.)

Unlike last time, simply running "Bcdedit.exe -set TESTSIGNING OFF" does NOT work.

Note: I am running a fully licensed and activated copy of Windows 7; this watermark is only telling you that you have the SP1 beta installed!

Solution

1. Download this software (author credited below).

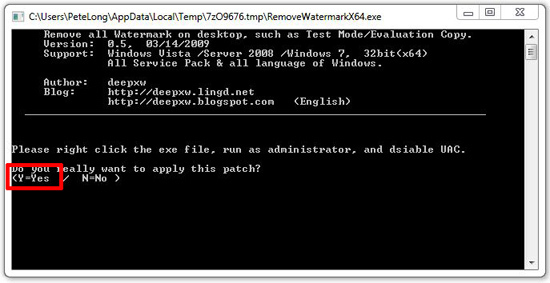

2. Extract the Zip file, then run either RemoveWatermarkx86.exe or RemoveWatermarkx64.exe (depending on whether your version of Windows 7 is 32-bit or 64-bit).

3. Press “Y” for Yes.

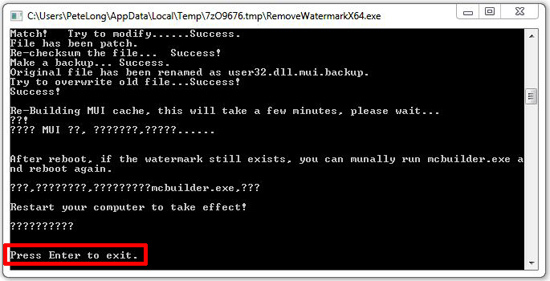

4. It can take a few minutes and may look like it has hung; don't panic! Eventually it will say "Press Enter to Exit". Then reboot your machine.

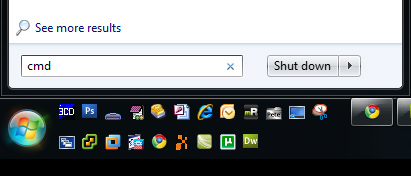

5. This fixed mine. However, if yours persists, open an administrative command prompt again (either log in as an administrator, or click Start > type cmd > press CTRL+SHIFT+Enter).

6. Run the following command:

7. Reboot the PC.
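If you are not sure which executable to run in step 2, the sketch below picks one from the standard Windows environment variables. It assumes that `PROCESSOR_ARCHITECTURE` reports `AMD64` on 64-bit Windows, and that a 32-bit process running on 64-bit Windows (WOW64) sees `x86` there with the real architecture in `PROCESSOR_ARCHITEW6432`. The function name `pick_remover` is hypothetical; the file names come from the Zip file described above.

```python
import os


def pick_remover(env=None) -> str:
    """Return the RemoveWatermark executable that matches this Windows install."""
    env = os.environ if env is None else env
    # Under WOW64, PROCESSOR_ARCHITECTURE says x86 even on 64-bit Windows,
    # so prefer PROCESSOR_ARCHITEW6432 when it is present.
    arch = env.get("PROCESSOR_ARCHITEW6432") or env.get("PROCESSOR_ARCHITECTURE", "")
    if arch.upper() == "AMD64":
        return "RemoveWatermarkx64.exe"
    # Anything else (including a missing value) falls back to the 32-bit tool.
    return "RemoveWatermarkx86.exe"
```

Calling `pick_remover()` with no arguments reads the live environment; passing a dictionary makes it easy to check the logic on any machine.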

Related Articles, References, Credits, or External Links

NA